Traditional impact evaluations tend to measure change in pre-specified outcomes. Let’s say that 10 people attend a cycling training course; a traditional impact evaluation might measure anticipated outcomes, such as people’s confidence in cycling, at the beginning and end of the course. But what happens if a few people go on to set up a cycling club or start lobbying for safer roads where they live? These are just two examples of unanticipated impacts that could lead to further societal benefits or more sustainable effects. So shouldn’t we find a meaningful way to capture the wider impacts of interventions? Over the last few years, Dr James Noble, University of Bristol, and Dr Jennifer Hall, Bradford Institute for Health Research, have adapted a method called Ripple Effects Mapping to do this.

A participatory method

Ripple Effects Mapping, or REM, is a participatory and qualitative form of impact evaluation. When we learned of this method, we thought that it would be invaluable in helping us understand some of the complex public health interventions we were evaluating – particularly the elements that were deemed hard to measure. However, to make it better suited to our needs, we adapted it further. It is this adapted method that we describe here.

One fundamental aspect of REM is that it is participatory. This means that the people who are involved in the design or delivery of an intervention, and those who are affected by it, are invited to participate in data collection workshops. These workshops are qualitative in nature: the aim is to build up a collective picture of what happened during the life course of an intervention.

Running a REM workshop

Workshops are usually split into five main sections. The first section gives everyone time to speak with each other: to discuss how they have been involved in the intervention, what they are proud of, and what impacts have been important to them. This is predominantly a warm-up activity.

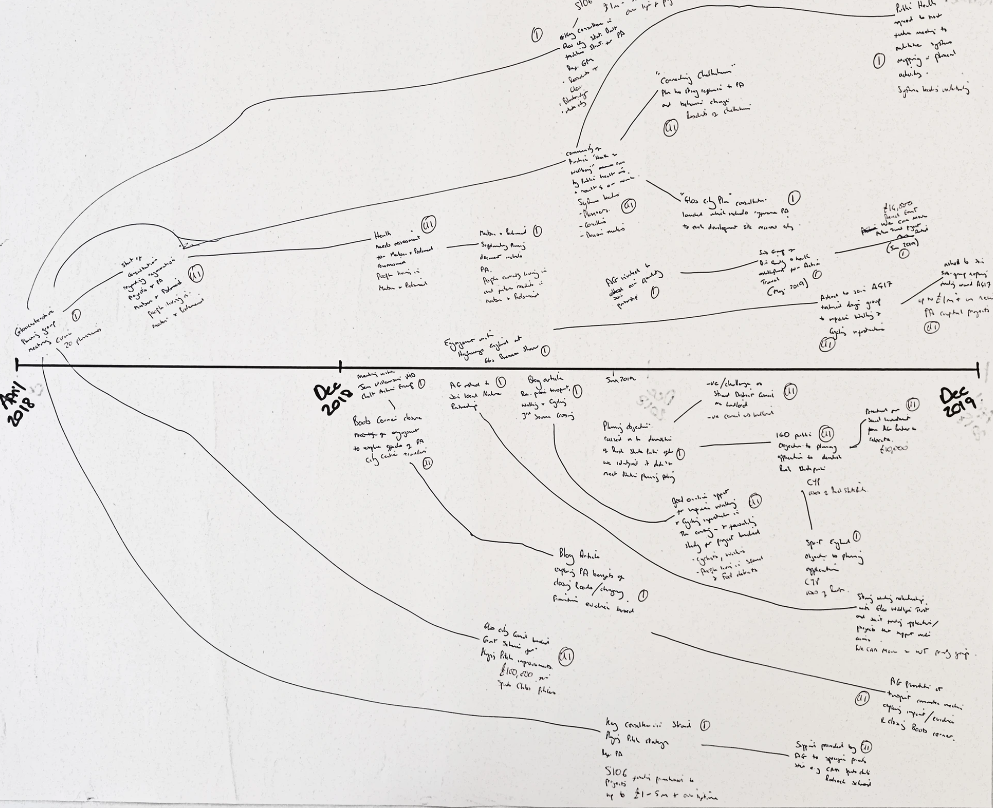

The second and third sections are perhaps the most important. Participants form small groups of 4-6 people and are asked to write down and discuss what they were involved in and what the impacts have been. They capture this on flip chart paper which has a timeline in its centre (see Figure 1). A facilitator can guide participants through the activity by asking probing questions. The facilitator also makes sure that everyone has an equal opportunity to contribute.

One of the adaptations we made was to treat REM as both a retrospective and a prospective method. In the workshops, participants can map their activities and impacts over the previous 3-6 months, and they can also anticipate what they envisage will happen over the next 3-6 months. Follow-up workshops can then be carried out 3-6 months later, in which participants revisit the previous REM outputs and ask, “Did what we thought was going to happen, happen?” We also recommend that the first REM workshop is delivered in the first 3-6 months of an intervention.

The fourth section is a reflective one. Now that activities and impacts are visualised, the facilitator can ask participants to reflect on which they deem to be the most significant and why. But often, a more interesting conversation happens around the least significant impacts. Where did they invest time and energy which didn’t lead to anything meaningful? Why did this happen? What could be done in the future to reduce the chance of this happening again? Were other factors at play which prevented their work being impactful? Some of this discussion can also be added to the map, or it could be audio-recorded and analysed later.

The last section of the workshop is also reflective. REM is not only an evaluative tool but also one which can drive continuous intervention improvement. So here, the facilitator can get the group to discuss what they will do next as a result of attending the REM workshop. It might be that some participants didn’t previously know of each other, and merely being in the workshop created the opportunity for new collaborations.

Workshops usually require a couple of hours. If you have less time, then elements of the workshop can be shortened. Follow-up workshops can also be shorter, as participants become more familiar with the method. We’ve tested the adapted method in online and in-person formats. Both can work, and each has pros and cons, but the in-person format probably allows for greater levels of participation and discussion.

Further information

Within the paper and our supplementary online training, we provide further details on how to apply the method and the circumstances in which it may be useful. Perhaps most importantly, we encourage others to adapt the method to meet their own needs. Hopefully, this method, and future iterations of it, will offer one means of capturing the realities of efforts to improve public health.

Figure 1: Example REM map
